Far and away, this is the question I get asked most by anyone diving into game development.

Be it a startup team or a small group of students, every project needs to decide on its tech stack. It’s definitely a hard question, but the good news is that there are a number of options.

Below, I’ve listed a selection of game engines that are, in my opinion, a good place to start.

Unreal Engine

The first engine that is often spoken of for AAA gaming, animation and visual effects is the Unreal Engine by Epic Games. You should consider this engine the “go to” for AAA style video game development. It’s a tank. Its strength is “realism” for third person, first person or strategy games. Major game studios use it to scale teams of hundreds of people.

Flooded with money, Epic is the Mongol Horde of the Game Engine world. From their marketing assault, it is clear they wish to own cinematic production in Hollywood, or really anything where there’s a camera and subject. With real time workflows being so disruptive, they might just win. Many, if not all, of the major entertainment computer graphics firms have integrated Unreal, or will integrate Unreal, into their pipeline. Software has eaten film production, and Unreal is the mouth.

Unreal will most likely be the dominant player in many real time interactive experiences from gaming, to architecture, virtual production, and many other fields that require high fidelity graphics.

Pro

If you are interested in creating AAA quality games within the fairly known design paradigms of the console gaming world, then this is a good choice. Even if you don’t wish to use it, you might find yourself sucked into a team or project that is dependent on it.

Unreal has made real strides in making programming accessible to artists with its Blueprints system. This node based scripting is a good way to learn, and an even better way to get the designers more “hands on” in the system.

If you are a visual effects artist, or a feature film animator, it is also a very good choice to think about picking up. It’s sort of becoming the industry standard. Every movie shop is looking for people skilled in its use right now.

Con

Much like Microsoft was for business and personal computing in the ’90s, Unreal will most likely be the corporate operating system for real time 3D content development. It will grow relentlessly, and probably won’t listen to the little voices of the independents.

If you are an indie developer, interactive artist, data visualizer, or are dependent on rapid prototyping, there are other engines that might suit you a bit better.

For Me

Since most of my work is entertainment industry facing, Unreal is in the “must learn” category. The animation systems are robust and powerful, and there is no arguing with the asset development pipeline, especially with the acquisition of Quixel Megascans, the development of MetaHumans, and the development of Nanite in Unreal Engine 5. It’s not without its frustrations, which, for me, come down to the material and lighting rebuild loading times.

I don’t use it to prototype unless I am experimenting in animation systems. Its size makes it hard to work with GitHub, and there is very little thinking about, or support for, blockchain networks or AI models. They recently launched a Python interpreter, but I’ve barely found a support network for developing content with it yet.

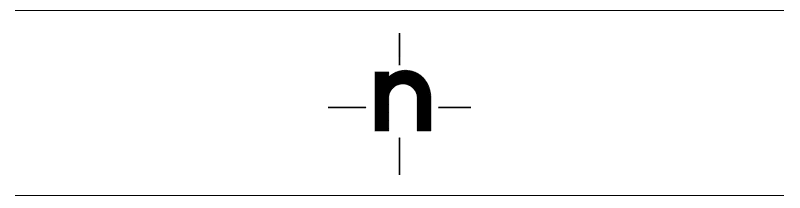

Unity

Unity applications currently account for three billion installs around the world. As a 3D interactive development package, it is THE dominant player. It is also the major player in independent and mid-tier game development. It is fairly standard in interactive design and commercial application development.

That means, if you are an independent game developer, this has been your engine for a while, for Unity has the controlling interest in this sector. If you are an interactive developer at an agency or working with a location based experience of some sort, this is also most likely the engine you would use.

Everything in Unity is a Class. You make an asset and attach a script, and then it’s interactive. By making it a Prefab, you can use it again and again. This is the core value of game engines, and Unity has done very well at democratizing this technology for creatives. It uses C#, which has a lot of similarities to Java. That can be somewhat difficult for new programmers, but it is easier to pick up for those with a touch of development experience. Unity, probably inspired by Epic’s Blueprints, has begun to integrate Bolt, a visual scripting system it acquired. (Though, I have not used it.)
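To make the "attach a script, then reuse it as a Prefab" idea concrete, here is a minimal sketch of the pattern. It is written in Python rather than Unity's C#, and it is not Unity's API: `GameObject`, `Spinner` and `instantiate` are names I made up for illustration.

```python
import copy
from dataclasses import dataclass, field


class Spinner:
    """A script-like component: rotates its owner a little on every update."""

    def __init__(self, degrees_per_second):
        self.degrees_per_second = degrees_per_second

    def update(self, owner, dt):
        owner.rotation = (owner.rotation + self.degrees_per_second * dt) % 360


@dataclass
class GameObject:
    """An asset in the scene plus whatever scripts are attached to it."""

    name: str
    rotation: float = 0.0
    components: list = field(default_factory=list)

    def update(self, dt):
        for component in self.components:
            component.update(self, dt)


def instantiate(prefab, name):
    """Stamp out an independent copy of a template object (the prefab idea)."""
    clone = copy.deepcopy(prefab)
    clone.name = name
    return clone


# Build the template once, then reuse it again and again.
coin_prefab = GameObject("Coin", components=[Spinner(degrees_per_second=90)])
coin_a = instantiate(coin_prefab, "Coin A")
coin_b = instantiate(coin_prefab, "Coin B")

coin_a.update(dt=0.5)  # only coin_a advances; coin_b and the prefab are untouched
```

The point of the sketch is the shape, not the details: behavior lives in small classes, objects are bags of those classes, and a prefab is just a template you copy.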

They are not without their movie making ambitions though. A recent deal with New Zealand based Weta Digital stakes their claim in the visual effects and narrative content world. I watch with a keen eye to see what happens.

Unity is also a publicly traded company, with a weird licensing system that kicks in when you actually start making real money. Since their focus is more diverse (the larger interactive market vs. the AAA game market), they tend to have plugins for some of the more innovative trends like Augmented Reality or Machine Learning. Unity is also forming initiatives with auto companies, launching technology visualization initiatives, and developing an ecosystem of educational content. It’s clear they are positioning themselves as an interactive artist’s tool, more than just a game engine. There will always be games developed with it, but many other things will be built with it as well.

Pro

Wide adoption. Unity can really be used to do a lot of things, and is a bit of a “Swiss army knife” for interactive and independent game experiences. There is a very active community, and the learning resources on YouTube and in educational communities are extremely rich in content.

Con

Unity’s corporate-ness is beginning to show. They are clearly a little wary of Epic’s dominance of some sectors, and are also a little “nickel-and-dime” with their pricing models. Yes, the engine is free for the most part, but the upgrades for AR and machine learning, which are priced at $50/month, are a little worrisome.

For Me

Most of my experience is focused on the Unreal Engine, but I have recently opened my brain to more development work with Unity.

I’m also in the middle of a reinforcement learning obsession, so I’m interested in the accessibility of Unity’s Machine Learning Agents. Stay tuned.

Godot

As an advocate of open source, this engine is a darling of mine. It’s the “Blender of the game engine world.” There is a small team of developers, led by the remarkably talented Juan Linietsky. With a passionate open source community rallying behind it, it is gaining traction at an astounding rate. Right now, it is best for 2D games, though their recent foray into 3D and the projected development of the Vulkan render system will most likely change that.

They use a custom coding language called GDScript, which, much like Python, is a high-level and easy to read language. Relative to Unity and Unreal, Godot has a much smaller base, but that base is extremely rabid.

I feel that Godot is uniquely positioned when it comes to innovative gaming and decentralized development. When we start distributing our networks and commerce, are we really going to cut in Unreal or Unity?

Godot, as open source, is the natural choice for teams that are looking to create autonomous or community driven game systems. The community, not a centralized player, will shape its functional use. (Whatever that turns out to be.)

Pro

A great open source community that supports development, learning and creation with it. The more the community grows and adapts, the more robust and creative the engine becomes.

GDScript is also very much like Python: indentation based and light on boilerplate. This means that you can look at it, squint a bit, and for the most part, read what is happening. This makes getting very simple stuff up and going in the engine very quick.

Con

Open source software can also have some sharp edges. Fancy, well funded software development always tends to look polished, even if the usability is frustrating. Open source tends to be the opposite. Functionality is at a premium, but that doesn’t always mean the usability or interface has it quite figured out.

The 3D content in Godot is still a little early. As a result, there aren’t as many support systems in place.

For Me

I had a brief and romantic affair with Godot as I played with a number of 2D pixel art prototypes. For learning game development, it is one of my favorites, but when it comes to competing with the mainstream big boys above, it is a few years away.

O3DE

There once was a company that sold online books, that turned into a global juggernaut of a tech company. Amazon has decided to get into the high-end engine game, and their entry, while a bit rough and young, may be an interesting entrance to the space.

Game developer Crytek, which was facing financial difficulties, licensed its CryEngine to Amazon several years ago. Amazon now had a real time renderer that looked amazing, but the accessibility of the engine left much to be desired if it were to be a mainstream consumer product. They built up their own GUI and UX on top and renamed the engine Lumberyard. But, recently, Amazon partnered with the Linux Foundation to open source the engine. With it, they rechristened it “O3DE.”

I have had very little interaction with it. In honesty, I just downloaded the update and have begun poking at it. I mention it because I have been watching the development of Lumberyard for a while, and I really can not discount the efforts of Amazon as they move into the gaming space.

In a lot of ways the engine looks and feels like Unreal or Unity, but Amazon is designing for the future. Without feeling the obligations of supporting the technical needs of today, they are trying to keep the engine more modular, instituting a system called “gems.”

These will allow developers to pick and choose the kind of plugins they need for the experience they are building. In the release I downloaded, they offered motion matching systems, which is surprising since neither of the other two major engines has offered it out of the box. It’s a forward looking move.
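The pick-and-choose idea behind gems can be sketched as a small plugin registry. This is only an illustration of modular design in Python, not O3DE's actual gem API: the `Engine` class, the gem classes and the toy "pose" numbers are all assumptions of mine.

```python
class Engine:
    """A tiny engine core that knows nothing about optional features."""

    def __init__(self):
        self.features = {}

    def enable(self, gem):
        """Load one optional module and let it register what it provides."""
        gem.register(self)


class MotionMatchingGem:
    name = "motion_matching"

    def register(self, engine):
        engine.features[self.name] = self.pick_pose

    def pick_pose(self, candidates, target):
        # Toy stand-in: choose the candidate pose value closest to the target.
        return min(candidates, key=lambda pose: abs(pose - target))


class AudioGem:
    name = "audio"

    def register(self, engine):
        engine.features[self.name] = lambda clip: f"playing {clip}"


engine = Engine()
engine.enable(MotionMatchingGem())  # this project opts in to motion matching only

best = engine.features["motion_matching"]([0.1, 0.6, 0.9], target=0.5)
```

Because `AudioGem` was never enabled, the engine carries no audio code path at all, which is the appeal of building an engine out of optional modules.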

There haven’t been a lot of times where Amazon has seriously entered a space and not, in a short time, made itself a major competitor. Having AWS so readily available to integrate into the engine, plus the fact that they have pushed firmly down the open source route, shows that they have major plans to position themselves in whatever metaverse they seem to think is coming.

It’s not ready for prime time yet, but in 18 months or so, they might be battle ready.

The Gateway Engines

For the “just getting started” type, here are some of my recommendations.

I tried game engines a number of times. I bounced out of Unity when I first tried. I struggled through Xcode development with cocos, barely understanding the process. I then tried Love2D for a small time, but grew tired of Lua. I was lost in the world of game engines.

Then, I discovered Construct 2. I used it to build a Metroidvania style game called “Agent Kickback.” It was the first time I felt like I could build the entire thing — on my own.

The software is built for deploying an HTML5 engine, which allowed fast loading times in browser based content, but the real value was the visual coding interface. In Construct, you snap functions together like Lego pieces. This was the first time I was able to get my mind around concepts like functions, variables, classes, optimization and state machines. It was a huge unlock.
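For anyone who hasn't met a state machine yet, here is roughly what that concept looks like once it is written out as code. This is a Python sketch of the idea, not Construct's event system, and the states and events are ones I invented for a generic platformer character.

```python
# Each entry reads like an event: "when in this state and this happens, go there".
TRANSITIONS = {
    ("idle", "move"): "running",
    ("running", "stop"): "idle",
    ("idle", "jump"): "jumping",
    ("running", "jump"): "jumping",
    ("jumping", "land"): "idle",
}


class Player:
    def __init__(self):
        self.state = "idle"  # a variable holding the current state

    def handle(self, event):
        """Follow a transition if one exists; otherwise ignore the event."""
        self.state = TRANSITIONS.get((self.state, event), self.state)
        return self.state


player = Player()
history = [player.handle(event) for event in ["move", "jump", "jump", "land"]]
```

Notice that the second "jump" does nothing, because there is no rule for jumping while already in the air. That kind of guard is exactly what you end up snapping together visually in a tool like Construct.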

After this point, I returned to the industry to use engines like Unity and Unreal, and found that I had much more confidence and direction.

I have heard that Game Maker is similarly approachable, but I have never used it. (Its popularity amongst some students makes me include it here.) These engines are a great way to get into game engines. Essentially, they have a low enough technical overhead to let in the artists.

Once you are in, however, you get it. And when you have reached a point that you want to actually build something a bit more than a starter level, you move on. In that case, move up to one of the engines I have listed above.

And What about the Web?

The web is also a wonderful place to play with 3d and game engines.

There are a lot of JavaScript frameworks. For example, Babylon.js is an open source engine from a nifty team at Microsoft. PlayCanvas, which was bought by Snap, is a more engine-y looking interface for developing web based interactive work. Another favorite of mine is A-Frame, a framework that uses HTML tags to place 3D objects, add a little animation, and even do VR!

A-Frame is built on a wonderful little library called three.js, a JavaScript core that actually renders 3D objects in the browser. (Babylon.js and PlayCanvas bring their own renderers.) Chances are you have seen some 3D around the internet, and I’m pretty sure three.js was behind a lot of it.

While these frameworks show a lot of promise, (I, personally, love spending time with some of these programs) my feeling is that they are very much in the early days of development. Could the web be used for high fidelity real time graphics and rendering? Well, that debate might best be suited for another post.

As always, I welcome feedback, thoughts or suggestions. I am always open to discussing any of the things I list in my writing with other developers and educators. You can always reach out to me on Twitter @nyewarburton; my DMs are always open.

Thanks for reading, and happy game making!

Links and Resources

Construct Game Engine

Game Maker Engine

Three.js

A-Frame

Babylon.js

PlayCanvas